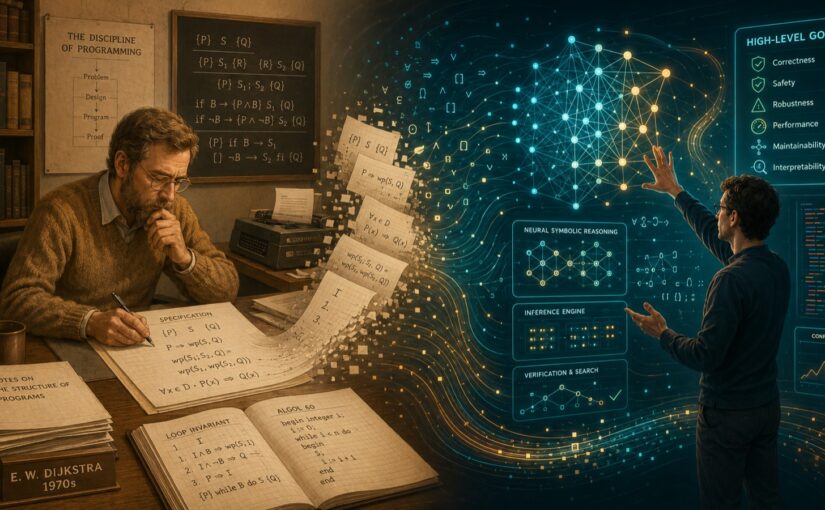

In 1978, Dijkstra wrote a famous short essay:

"On the Foolishness of 'Natural Language Programming.'"

His point: the biggest problem with natural language isn't that it's hard to understand. It's that it's far too easy to believe you've been clear.

Forty-eight years later, the era of large models has arrived.

And suddenly, many people are discovering—

Wait. Was Dijkstra... right?

AI writes code, but the output keeps drifting away from the requirements. Long contexts make the drift worse. "Close enough" eventually turns into "nowhere close."

So the whole industry starts frantically piling on:

specs, tests, guardrails, harnesses, CI/CD, agent protocols...

It looks like we've come full circle, right back to "formalization."

But I'm increasingly convinced:

Most people still haven't grasped the real trend.

Because they assume:

humans will continue to drive the formalization process in fine detail, forever.

And that may just be a transitional phase.

The real shift is this:

Formalization isn't going away. But the entity doing the formalizing is changing — from humans to machines.

In the past:

you had to write everything with extreme rigor yourself.

Because old computers were "brittle."

Single execution. No reflection. No feedback loop. No dynamic correction. Ambiguity in natural language meant instant catastrophe.

So in that era:

formalization was a burden humans had no choice but to bear.

But today's large model systems are different.

They're not:

"natural language → compiler."

They are:

natural language → reasoning → trial and error → environmental feedback → self-correction → testing → iteration.

This is no longer traditional program execution.

It's a dynamic closed-loop system.

So:

ambiguity is fatal for one-shot execution.

But for a system with feedback, loops, iteration, and final verification—

ambiguity may no longer be fatal, because each round of feedback gets a chance to catch a misreading and correct it.
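To make the loop concrete, here is a toy sketch in Python. It is not a real agent: `propose` is a hypothetical stand-in for a model call, `verify` is a hypothetical stand-in for tests or environmental feedback, and the ambiguous request "sort the numbers" is an invented example. The only thing it tries to show is that a wrong first reading gets rejected by feedback and replaced, instead of being executed once and trusted.

```python
from typing import Callable, List, Optional, Tuple

Candidate = Callable[[List[int]], List[int]]

# Hypothetical readings a model might consider for "sort the numbers".
# The wrong one is listed first on purpose.
INTERPRETATIONS: List[Tuple[str, Candidate]] = [
    ("descending", lambda xs: sorted(xs, reverse=True)),
    ("ascending", lambda xs: sorted(xs)),
]


def propose(intent: str, rejected: List[str]) -> Optional[Tuple[str, Candidate]]:
    """Stand-in for a model call: return the first reading not yet rejected.

    A real system would condition on `intent`; this toy version ignores it.
    """
    for name, fn in INTERPRETATIONS:
        if name not in rejected:
            return name, fn
    return None


def verify(fn: Candidate) -> Optional[str]:
    """Stand-in for environmental feedback: an error message, or None on success."""
    got = fn([3, 1, 2])
    if got != [1, 2, 3]:
        return f"expected [1, 2, 3], got {got}"
    return None


def closed_loop(intent: str, max_rounds: int = 5):
    """Propose, check, feed the failure back, and try again."""
    rejected: List[str] = []
    history: List[Tuple[str, Optional[str]]] = []
    for _ in range(max_rounds):
        proposal = propose(intent, rejected)
        if proposal is None:
            break  # no readings left to try
        name, fn = proposal
        error = verify(fn)
        history.append((name, error))
        if error is None:
            return name, history  # final verification passed
        rejected.append(name)  # the feedback shapes the next attempt
    return None, history


chosen, history = closed_loop("sort the numbers")
print(chosen)
for name, error in history:
    print(name, "->", error or "ok")
```

In a one-shot setting, the first reading ("descending") would simply have shipped. Here the ambiguity costs one extra round, and only because a check exists to reject it.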

Humans have always worked this way.

Kids don't learn language through formal grammar. Startups don't run meetings via type systems. Couples don't chat in protocol buffers.

Everyone relies on:

saying something wrong, and the world giving feedback.

So the real transformation of the AI era may not be:

"natural language replaces formalization."

It's:

machines begin to shoulder more and more of the formalization work for humans.

Many of today's best practices—

elaborate specs, cumbersome processes, excessive manual review, even certain ritualized software engineering ceremonies—

may, like hand-coded assembly of a previous era,

gradually recede into "compilation-layer details" handled automatically by machines.

Humans return to:

goals, direction, aesthetic judgment, value decisions.

Machines handle:

compressing vague intentions into precise execution.

This may be the one thing Dijkstra never saw coming.