A Scholarly Family

Ever since Qu Yuan, Chinese literati have been fond of tracing their ancestry to illustrious roots — "descendant of Gaoyang the Divine Emperor" and such declarations — to signal their noble bloodlines. When I was compiling and editing A Collection of Master Li's Posthumous Writings: Prefaces, I came across this passage in the first piece, "Preface to the Li Family Genealogy," explaining the origin of the family name Li:

"The forerunners of the Li clan, surnamed Ying, traced their descent from Gaoyang of the Zhuanxu lineage. One descendant, Gao Yao, served as Grand Justice (Dali) under Emperor Yao, and the family adopted 'Li' (理, meaning 'principle' or 'justice') as their surname from the title. During the reign of King Zhou of Shang, a descendant named Lizhen fled with his mother to the lost land of Yihou. Starving, they survived by eating plums (李, li) from the trees. They then changed their surname from 理 (Justice) to the homophonous 李 (Plum), and their descendants have borne this name ever since."

In my earlier, more perfunctory readings of the Posthumous Writings, I had mostly skipped Master Li's abstruse classical prose, drawn instead to the more accessible "modern writings" of my two granduncles in the appendix. As a result, I never registered this origin story. But my daughter once asked me: "Dad, you said our family name Li means plum — how come? Does that mean we Li family like plums in particular?" I had no idea whether the surname Li was actually connected to the fruit, so I dodged the question and told little Tiantian instead that statistically, Li had risen to become the most common surname in China — and perhaps the world. Even in our tiny Buffalo office there were two Uncle Li's — one of Korean descent. But eight hundred years ago, we were all one family.

Master Li's own account of this family history (the fall from officialdom, the change from 理 to 李, the "pointing at the tree and taking its name") struck me as too sparse. So I searched online and found a fuller treatise, On Gao Yao, Blood Ancestor of the Li Surname. It turned out that the primogenitor Gao Yao served Emperors Yao and Shun as Grand Justice, roughly a minister of justice. He was a man of incorruptible integrity whose achievements in statecraft were so esteemed that Emperor Shun personally named him his successor. Even Confucius honored him as one of the Four Sages of antiquity. In ancient China, officials took their office titles as surnames, hence 理氏 (the Li of Justice). Sadly, Sage Gao Yao fell ill and died before ascending the throne. Generations later, under the depraved King Zhou of Shang, a descendant named Li Zheng served as Grand Justice with the same upright character, and for his honesty the debauched king had him executed. His wife Qihe fled with their young son Lizhen to the lost land of Yihou (in present-day Henan). Starving, they spotted fruit on a tree and ate it to survive. Afraid of the king's pursuers, Lizhen dared not keep the surname 理. In gratitude for the "wood-seed" (muzi, 木子, the two components that combine to form the character 李) that saved them, he changed the family name to Li. From this seed, the Li lineage, the largest family name under heaven, branched and flourished across generations.

I told my daughter: not only do we come from a scholarly family, we are the direct descendants of Sage Gao Yao himself.

Master Li (Li Xiansheng, art name Xuexiang) was my great-grandfather. A Collection of Master Li's Posthumous Writings, composed in a modern form of classical Chinese (the style also known as "modern writings"), gathers his surviving works as transcribed by his disciples and their students: poems, lyrics, celebratory couplets, elegies, prefaces, and miscellaneous essays. His students edited them into a volume and published it privately in the 1930s.

The Posthumous Writings also includes works by my two granduncles: elder granduncle Li Yingwen and younger granduncle Li Yinghui. My great-grandfather was exceptionally open-minded about education, selling off family land to send his sons (my granduncles) to study in Japan. My own grandfather (Li Yingqi, the second son), however, was kept at home to manage the family estate, forfeiting the chance for overseas education. It's said that every year, my grandfather would travel to Nanjing to remit money from land sales to his two brothers in Japan. In the early 1920s, the two granduncles returned with law and political science degrees from Meiji University — rare credentials for that era, and a springboard for significant careers. That their subsequent achievements remained relatively modest (disproportionate to their education) and confined to the local sphere, I attribute to three factors: first, the times were harsh, with China in ceaseless turmoil from war and upheaval throughout the early 20th century; second, my great-grandfather was indifferent to fame and fortune, urging his children to carry on the family mission of local education rather than venture into the wider world; third, both granduncles suffered from poor health — they lacked the physical constitution for "revolution." Elder Granduncle Yingwen was bedridden for years, and it was country life that gradually restored his health. Younger Granduncle Yinghui died tragically young. Yet their writings reveal open minds deeply engaged with the issues of their day. Besides rustic pastoral pieces like "Li Yingwen — Elegy for a Dead Dove," they also produced fiery patriotic works, such as "Li Yinghui — Manifesto of the Anti-Japanese Association (Modeled on the Denunciation of Empress Wu)" and "Li Yingwen — Preface for Wang Joining the Volunteer Army."

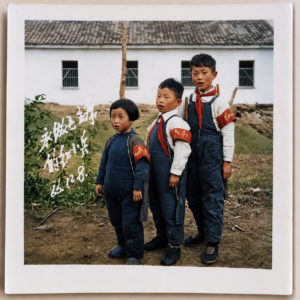

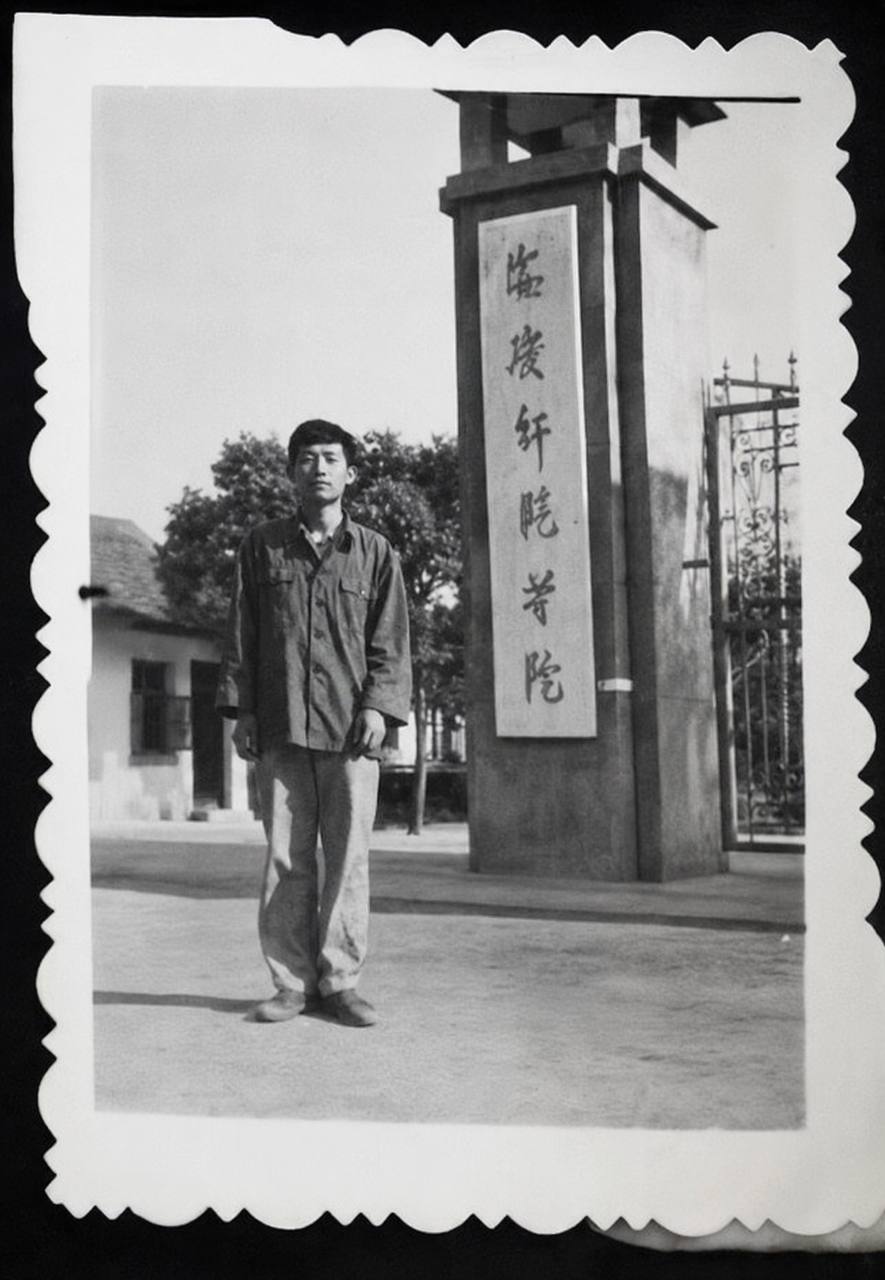

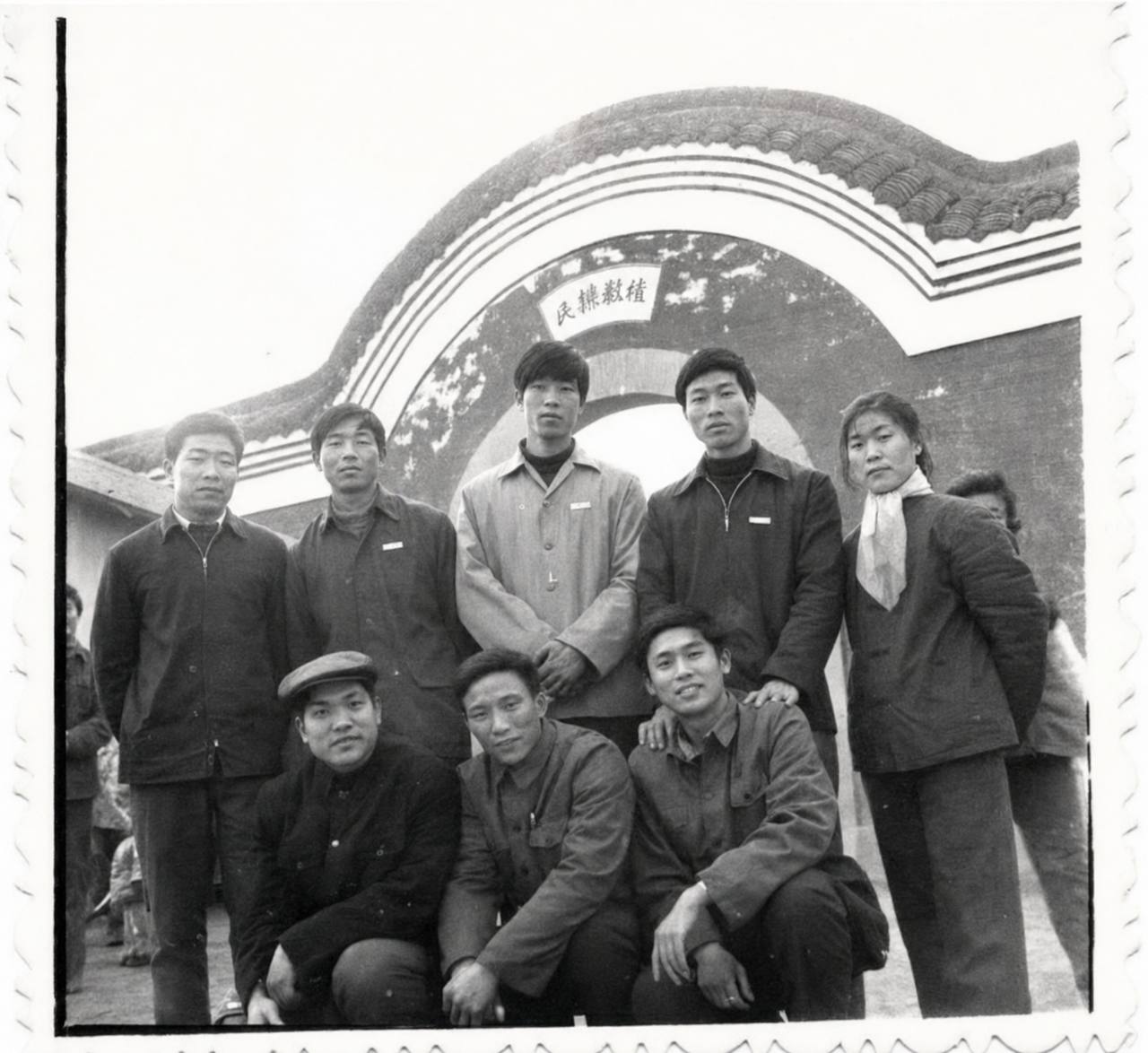

My grandfather died in the great famine of my birth year — a calamity that was three-tenths natural disaster, seven-tenths man-made catastrophe. Among the three brothers, only Elder Granduncle Yingwen was fortunate: he passed away peacefully at home in 1965, surrounded by every Li family descendant who had gathered for a grand funeral (see the family photograph below). I still remember each of us grandchildren, after the coffin was lowered, taking turns to scoop up a handful of yellow earth. As an enlightened gentry figure and a "united front target," Granduncle Yingwen had been treated with courtesy by the local government and was even elected as a county representative to the People's Congress, thus escaping the reach of political campaigns. That he departed this world the year before the Cultural Revolution began was an even greater stroke of fortune — otherwise, given the complexity of his personal history, he would have suffered terribly in that great upheaval. My maternal grandmother, who raised us through those years, was dragged out and struggled against during the Cultural Revolution, forced to wear a "Landlord Element" placard every day, subjected to humiliation that cast a lasting shadow over our childhood.

The above accounts for my "scholarly family" background — except that by my father's generation, the family fortune had seriously declined. Beset by foreign invasion and civil war, the country was in chaos, and life grew harder each day. My father often went hungry and cold as a child. In its heyday, the Li family's Chongshi Academy had enjoyed wide renown, its students scattered across the land like peaches and plums filling the world. Yet this decline proved a hidden blessing: when the Land Reform came, our family was classified as "Small-Scale Land Lessors" rather than one of the "Four Categories" (landlords, rich peasants, counter-revolutionaries, and bad elements — later expanded to include "rightists" designated in 1957). This spared us, the younger generation, from the brunt of political persecution.

The matter of "Small-Scale Land Lessor" classification carried its own stories. When we were children, family class status was an all-important political label: children of "poor and lower-middle peasants" were considered born revolutionaries with "red roots and upright shoots," innately superior. Children of the "landlord, rich peasant, counter-revolutionary, bad element, and rightist" classes faced extreme social discrimination, were denied opportunities such as factory jobs and schooling, and suffered constant bullying in daily life. I remember a girl in our elementary class who came from a landlord family; she cut such a pitiable figure, never able to hold her head up, yet classmates still taunted her relentlessly. In such an environment, we were all acutely sensitive about our family background. My own family situation was precarious: my mother was born into a landlord family. It was a pitiable sort of landlord, really: my maternal grandfather had saved every penny from a small business, denied himself fine food and clothing, tightened the whole family's belts, and poured everything into buying land in hopes of modest prosperity, and all he won in return was a landlord label. This became a fiercely guarded family secret. Fortunately, a child's class status followed the father's, so every time we filled out a form, the "family class" box read "Small-Scale Land Lessor." The problem was that, for a long time, we had no idea what this obscure, tongue-twisting classification actually meant politically, which left us perpetually anxious. I remember classmates discussing our strange class label. One self-proclaimed authority declared: "Small-Scale Land Lessor, that means little landlord!" (It wasn't that far off, actually.) And with that, we were suddenly shoved into the camp of "class enemies," utterly mortified. My cousin suffered the same anxiety. Then one day, he announced triumphantly that, after deep research into Chairman Mao's works and the relevant Party policy documents, he had discovered that "Small-Scale Land Lessor" was essentially equivalent to "Upper-Middle Peasant," which placed us squarely among those the revolutionary ranks sought to unite with. What's more, Chairman Mao himself came from an upper-middle-peasant family. These momentous findings brought us immense relief.

I visited the old family home in Keshan as a child, when my cousin led us up the mountain; remote and secluded, it felt like the Mountain of Flowers and Fruit. A few years ago, on a trip home to China, my brother drove us back there. It remains a forgotten corner to this day, reached by a single mountain road, bumpy and dusty, that narrows to barely a car's width as you approach. My ancestors must have chosen this Jiangnan hillside deliberately, building their grand compound in a spirit of retreat from the world, carving out their own Peach Blossom Spring. My father's memoir, Decades Through Wind and Rain, contains a vivid description of the family school there:

A Glance Back at the Old Residence

A deep courtyard mansion, antique and elegant, nestled against the mountain and facing a stream, its back to the east and its front to the west. Above the main gate, the couplet "The Nation's Grace, the Family's Joy / May Men Live Long, May Years Bring Harvest" stood steadfast through the seasons. The main quarters comprised five large rooms in the front row and five in the back, joined in the middle by a row of three open-air courtyards flanked on the two sides by two wing rooms. The three rows, each two stories high, formed an integrated whole. Upstairs, a continuous corridor circled the entire compound, a gallery on which one could stroll freely. To the left stood two "new rooms"; to the right and rear, a row of auxiliary quarters. The front courtyard, with its large and small gates, contained seven flower terraces, where pines and cypresses complemented one another, blossoms clustered in splendor, and fruit filled the air with fragrance. Among the flowers: plum, chrysanthemum, osmanthus, rose, briar, and sacred bamboo. Among the fruits: persimmon, peach, apricot, plum, and jujube. Every doorway was flanked by stone drums and lions; the courtyards were paved in marble. The bricks and tiles were custom-fired in the family's own kiln, of the highest quality; the timber, first-rate, was floated down the river from Jiangxi on rafts, a testament to the master-builder's meticulous vision. The upper floor of the main building served as classrooms and student dormitories; the lower floor and the "new rooms" were the family living quarters; the foot-house held the wine-making workshop, kitchen, and firewood store.

书香门第

中国文人自屈原始,就喜欢炫耀自己的祖上,"帝高阳之苗裔兮"云云,以示自己根正苗红,血统高贵。早年整理校对《李老夫子遗墨:序类》,第一篇"李氏创修宗谱序"也提到"李氏"的来源如下:

"按李氏之先,自嬴姓顓頊高阳氏曰皋陶者,为堯大理官,始为理氏,至殷紂时有曰利贞者,偕母逃难于伊候之墟,食李实以全生,复改理为李,子孙因以为氏焉。"

以前读《李老夫子遗墨》比较偷懒,基本跳过晦涩难懂的李老夫子正文,而对《遗墨附录》中更贴近近現代生活的两位叔爷的"时文"感兴趣,因此对这段"李氏"来源的掌故没有印象。女儿小时候问我:"Dad, you said our family name Li means plum, how come? Does that mean we Li family like plums in particular?" 我当时不知道"李氏"跟李子到底有沒有关联,只好顾左右而言他,告诉甜甜,据最新统计,"李氏"似乎已经上升到中国的(可能也是世界上的)第一大姓,就連小小的水牛城辦公室就有兩位 Uncle Li's, 其中一位還是朝鮮族裔,但八百年前都是一家人哪。

李老夫子對祖上家道中落、改"理"為"李"、"指树为姓"的歷史,撰述失之簡陋。于是上網進一步搜尋資料,查得《李姓血祖皋陶并李姓考》。原來,李家的始祖皋陶在堯舜時代就任國家重臣"大理官"(司法部長),清明正則,功勛卓著,經邦緯國,英名蓋世,舜帝親立為接班人,甚至孔夫子也拜其为上古"四圣"之一。古人以官为氏,因称理氏。所惜圣皋陶帝業未举而病亡。至商紂王朝,圣皋陶的后代理征仍繼任理官,正直清廉,为荒淫昏庸的纣王所不容,终遭殺身之祸。于是,"理征的妻子契和氏带着幼子利贞逃了出来,奔于伊候之墟(今河南境内),饥饿不堪,见一树上结有果实,便采了来吃,母子得以活命,其后,利贞畏于纣王的追捕而不敢姓理,于是以'木子'救命之恩,改称李氏"。天下第一大姓李氏由此而宗派繁衍,生生不息。

我告訴女儿:咱們非但出身書香門第,還是大圣人皋陶的传人呢。

李老夫子(李咸昇,號學香)是我的曾祖父。《李老夫子遺墨》(現代文言,又稱"時文")收集了其徒子徒孫傳抄的李老夫子遺作,包括詩詞歌賦、喜壽輓聯、序傳雜文等,由他的門生編輯成冊,內部發行於上個世紀三十年代。

《遗墨》還收錄了我的兩位叔爺(伯祖父李應文和叔祖父李應會)的作品。曾祖父非常開明,重視教育,不惜變賣田產送孩子(我的叔爺)去日本留學深造。但我的爺爺(李應期,行二)被曾祖父留下來幫助理家,失去了留學機會。據說,我爺爺當年每年去南京一趟,將家產變賣的銀子匯款到日本,供給兩個兄弟的學業。兩位叔爺上個世紀二十年代初分別獲得明治大學法學士和政學士學位歸國。在那個年代,有這樣教育背景的人才很難得,本可做一番大事業。他們後來的建樹不大(與其教育水平不成比例),影響止於本地,我猜想原因有三:一是年代不濟,中國自上世紀初開始,兵荒馬亂不斷;二是曾祖父淡泊名利,進而要求孩子們繼承父業,在家鄉興辦教育,而不是鼓勵孩子們出去闖天下;三是兩位叔爺身體都不大好,沒有"革命"的本錢:伯祖父久卧病榻,是鄉間生活使他休養生息,逐漸康復;叔祖父更是不幸,英年早逝。但是從他們所著文字,可以看出,他們思想開明,關註時事。除了鄉居閑篇如"李應文-哀死鴿文"外,也不乏豪情熱血之作,如"李應會-抗日會宣言(仿討武曌檄)","李應文-王君加入義勇軍序"。

我爺爺在我出生那年死於三分天災、七分人禍的大飢荒。三兄弟中,就數伯祖父李應文比較幸運,1965年在老家壽終正寢。李家所有晚輩全部到齊,舉行隆重葬禮(李家合影見下)。還記得我們孫兒輩,在棺柩落地後,每人輪流掬一捧黃土。伯祖父生前作為開明紳士和"統戰對象",受到當地政府的禮遇,曾經當選為縣人大代表,幸免於政治運動的波及。仙逝於文革前一年,更是大幸,否則,以他歷史上的複雜經歷,大革命中少吃不了苦頭。一直看顧我們長大的外祖母在文革中,就被揪鬥,每日掛著"地主分子"的牌子,受盡羞辱,給我們幼小心靈蒙上陰影。

以上可算是我的"書香門第"背景。只不過,到我父親這一輩,由於國家內憂外患(抗日和內戰),連年戰亂,家道中落,生活日漸艱難。我父親小時候忍飢挨凍的事常有。想當年,李家"崇實學校"在當地可是富有盛名,桃李滿天下。不過,家道衰落倒成為一件好事:土改的时候,家庭由此被定為"小土地出租",而不是"地主"、"富農"這樣的"四類分子"(指的是地主、富農、反革命、壞分子四類,後來又加上57年劃分的"右派"),使得我們後輩少受政治運動的衝擊。

說到家庭成分"小土地出租",還有一些故事。在我們小時候,家庭成分是一個很重要的政治標簽:"貧下中農"子弟被認為天生革命,"根正苗紅",高人一等;而"地、富、反、壞、右"子弟受到極端的社會歧視,被剝奪很多機會(招工、上學等),而且日常生活中也常常受欺侮。還記得我們小學時班上有一個女生,家庭出生地主,很可憐的樣子,總是抬不起頭。就這樣,還常常有同學羞辱她。在這樣的環境裡,我們每個人對家庭出身自然很敏感。我家情況不是很妙,母親出身地主(是個可憐的土地主:外祖父做小生意賺了點錢,捨不得吃和穿,一家人勒緊褲腰帶,卯足勁置辦田產,以期小康,換來了一個地主帽子),成了我們的一個死守的秘密。好在子女家庭出身隨父,所以我們每次填表,家庭成分欄都是"小土地出租"。問題出在,很長一段時間,我們搞不清這個比較偏僻拗口的成分的政治含義,心裡不免惴惴。記得在班上,有幾個同學議論我家這個奇怪的出身,其中一個自作聰明地說:"小土地出租,就是小地主"(其實這個理解不算離譜),一下子把我們推到"階級敵人"陣營,讓我們無地自容。我的堂兄也有同樣的煩惱。有一天,他很高興地宣佈,經過深入研究,學習毛主席著作和有關黨的政策文件,發現"小土地出租"大體相當於"上中農",屬於革命隊伍的團結對象。而且黨的主席毛澤東也出身於上中農家庭。這些偉大發現使我們大大松了一口氣。

磕山老家,小时候去过,堂兄还领着爬山,感觉是个花果山,僻静偏远。几年前回国探亲,哥哥开车带我们又去了趟老家,至今仍是被遗忘的角落,一条山区小路,颠簸起伏,尘土飞扬。临近老家,山路狭窄到勉强可以过一辆车。当年,先辈择此江南山地而居,大兴土木,大约很有些躲避尘世,开辟桃园的想法。老爸的回忆录《风雨几春秋》中对老家的李家学堂有生动记述:

故居回眸

深宅大院,古色古香,依山面溪,坐东朝西。大门上"国恩家庆,人寿年丰"对联经年常在。正房是前后各五大间,中间一排由三个天井和两边二个厢房组成。这样前后三排,上下两层,构成一体。楼上形成环状贯通的走马楼,左边有两间"新屋",右边及后面是一排裙屋。前面院子,有大小院门,院内七个花台,松柏相衬,花簇绵秀,果实飘香。花有梅、菊、桂、及玫瑰、蔷薇、天竹;果有柿、桃、杏、李、枣等。所有大门均有石鼓、石狮,天井是大理石铺成。建房的砖瓦是自家建窑特制,质量堪称上乘;木材取自江西,放排顺江而下,更是一流,足见主事者之匠心。正屋楼上是教学场所和学生宿舍,楼下和"新屋"是家人生活区,脚屋是酿酒作坊和厨房、柴库。

From 朝华午拾 (Morning Flowers Collected at Dusk). Original Chinese: 《朝华之二:书香门第》.