It's not just that "agents have finally arrived." It's that we've been asking the wrong question for three years.

We thought LLMs couldn't land in production because the models weren't smart enough. Then we realized: the real deficit wasn't the "brain." It was the body, the nerves, the hands, the feet, the memory, the discipline, the boundaries, the feedback loops.

LLMs have been eloquent for a long time. They can talk up a storm. What they can't do is act reliably. They're like a brilliant strategist in a glass room: reading maps flawlessly, laying out campaign plans, analyzing world affairs with stunning clarity. But ask them to move a box in the warehouse, and they don't even know where the door is.

This is what "impressive in theory, useless in practice" actually means.

It's not that the model lacks knowledge or reasoning. It's that it was never plugged into the real world's execution loop.

For three years, the industry oscillated between excitement and disappointment because we were stunned by "linguistic intelligence" but vastly underestimated the civil engineering required for "action intelligence." LLMs gave us a core that understands, plans, expresses, and generates. But that core is not a product. It's an engine, not a car. You can't take an engine onto the highway.

The real breakthrough wasn't making models slightly bigger. It was someone finally, diligently, bolting on the chassis: file systems, shells, browsers, MCP, cron, permissions, logging, rollbacks, skills, memory, delegation, sandboxes, watchdogs, task queues, failure retrospectives, human approval gates, platform adapters.

None of these are sexy. None would make an investor pound the table shouting "AGI!" But together, they form the skeleton that turns an agent from "talking" to "doing."

That's why Peter — a pure systems engineer — broke through first, while the geniuses at top labs didn't.

Because this was never a "model scientist's problem." It was an operating systems problem.

Model scientists ask: Does the model have stronger reasoning? Bigger context? Higher benchmarks?

Systems engineers ask: What happens when it fails? How do permissions narrow? Where does state persist? How are tools registered? Who restarts the process when it dies? Is there a diff before writing? Confirmation before publishing? How do you find a lost browser tab? How do you switch providers when the API gets expensive? If it works today, how do you reproduce it tomorrow? Can it run on its own while the user sleeps — without running wild?

These are the real problems of agents.
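Two of those questions, "How do permissions narrow?" and "Is there a diff before writing?", fit in a few lines. What follows is a minimal sketch, not how any particular agent system does it: `guarded_write`, `allowed_root`, and the `approve` callback are hypothetical names invented for illustration, and `Path.is_relative_to` assumes Python 3.9 or later.

```python
import difflib
from pathlib import Path
from typing import Callable


def guarded_write(path: Path, new_text: str, allowed_root: Path,
                  approve: Callable[[str], bool]) -> bool:
    """Write new_text to path only if it is inside allowed_root and approved.

    Hypothetical helper: returns True if the file was written, False if
    nothing changed or the approver said no.
    """
    target = path.resolve()
    root = allowed_root.resolve()
    # Permission narrowing: refuse anything outside the allowed directory.
    if not target.is_relative_to(root):
        raise PermissionError(f"{target} is outside {root}")

    old_text = target.read_text() if target.exists() else ""
    # Diff before writing: compute exactly what would change.
    diff = "".join(difflib.unified_diff(
        old_text.splitlines(keepends=True),
        new_text.splitlines(keepends=True),
        fromfile=f"{target.name} (current)",
        tofile=f"{target.name} (proposed)",
    ))
    if not diff:
        return False  # Nothing to change, so nothing to approve.
    # Human approval gate: the caller decides how to ask (CLI prompt, chat, UI).
    if not approve(diff):
        return False
    target.write_text(new_text)
    return True
```

In an interactive session, `approve` could be as simple as `lambda d: input(d + "\nApply? [y/N] ").lower() == "y"`. The point is the ordering: the boundary check and the diff both happen before a single byte is written.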

LLMs used to be like a genius with a stock of catchphrases: "I can write code for you." "I can analyze your market." "I can manage your knowledge base." "I can handle your publishing."

All true in theory. But on the ground, they die in small, grubby places: the cookie isn't in this session, Chrome permissions aren't enabled, React state hasn't updated, the button click silently failed, the file path is wrong, there's no log evidence, tokens are burning, the publishing platform triggered anti-spam, the process didn't come back after a system restart.

These aren't AGI problems. These are plumbing problems.

And the real world is made of plumbing.

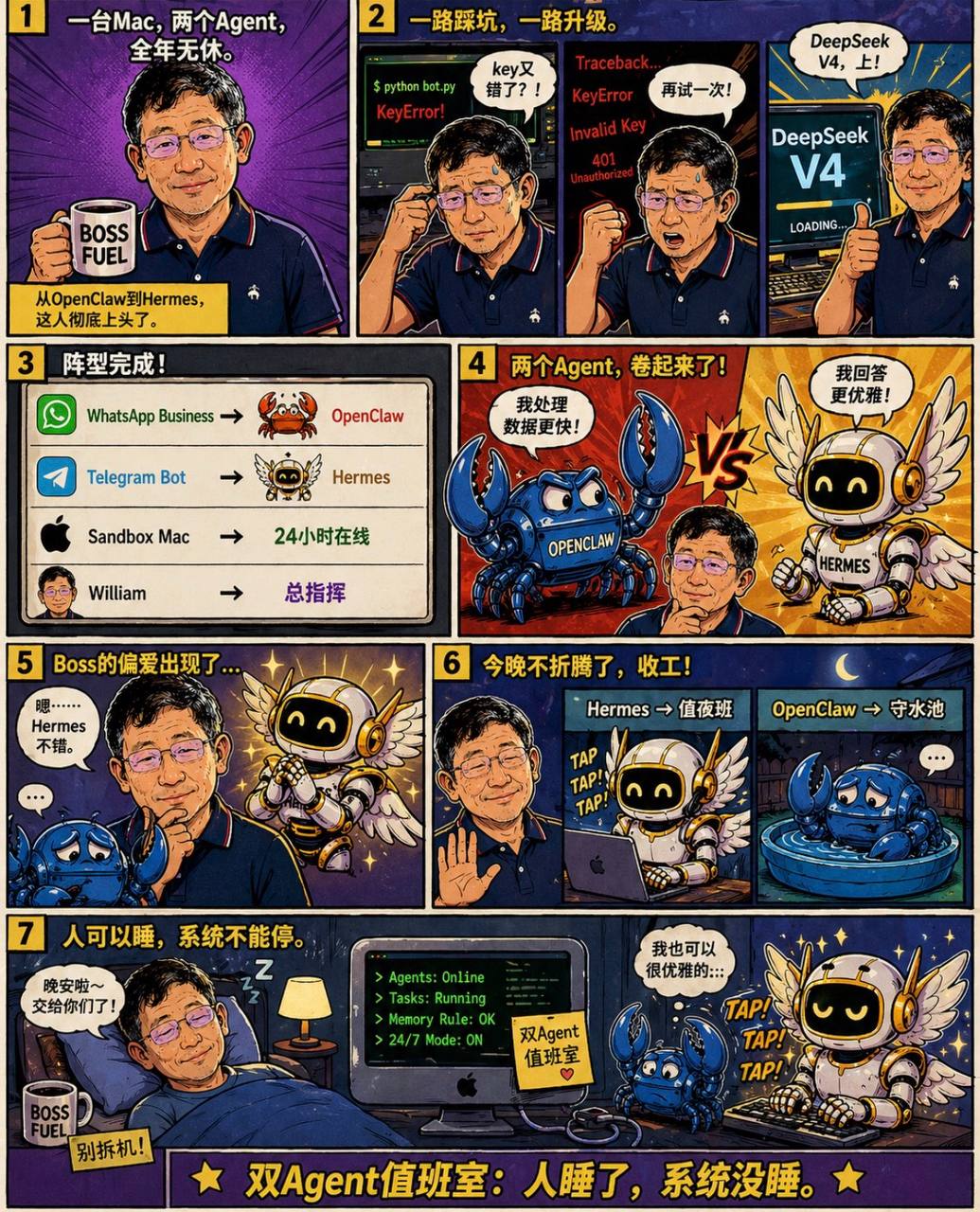

That's why the "explosion" of systems like OpenClaw and Hermes isn't about creating a smarter model. It's about embedding the model in an engineering shell capable of sustained action. That shell looks low-level. But it's what decides life or death.

I'd summarize this technological trajectory in four stages:

Stage 1 — The Wow Period: Humanity discovers for the first time that machines can speak, write, code, explain, translate, and summarize like humans. The keyword is "wow."

Stage 2 — The Disappointment Period: Companies start trials and discover that demos are beautiful but production is brutal. LLMs can answer questions but can't own workflows; generate proposals but can't guarantee execution; write code but can't maintain systems; chat endlessly but can't take responsibility for outcomes. The keyword is "then what?"

Stage 3 — The Tooling Period: Function calling, RAG, workflows, browser automation, code interpreters, MCP, and agent frameworks gradually emerge. Models start to have hands, but clumsy, uncoordinated ones that keep hitting walls. The keyword is "it moves, but it's unstable."

Stage 4 — The Systems Engineering Period: The real breakthrough happens here. Not point tools, but complete closed loops: task intake, state persistence, tool orchestration, permission control, log evidence, error recovery, human confirmation, scheduled execution, cross-platform delivery, experience accumulation. The keyword is "operational."
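To make "closed loop" concrete, here is a small sketch covering three items from that list: state persistence, log evidence, and error recovery. It assumes tasks are plain Python callables that return JSON-serializable results. `run_tasks` and the state-file layout are hypothetical and not taken from OpenClaw, Hermes, or any other system.

```python
import json
import logging
from pathlib import Path
from typing import Callable, Dict

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("agent_loop")


def run_tasks(tasks: Dict[str, Callable[[], object]], state_file: Path,
              max_attempts: int = 3) -> Dict[str, dict]:
    """Run each named task, persisting status so a restart can resume."""
    # State persistence: reload whatever a previous run already finished.
    state = json.loads(state_file.read_text()) if state_file.exists() else {}
    for name, handler in tasks.items():
        if state.get(name, {}).get("status") == "done":
            log.info("skip %s (already done)", name)
            continue
        # Error recovery: retry a bounded number of times, then move on.
        for attempt in range(1, max_attempts + 1):
            try:
                result = handler()
                state[name] = {"status": "done", "attempts": attempt,
                               "result": result}
                log.info("done %s on attempt %d", name, attempt)
                break
            except Exception as exc:
                # Log evidence: record what failed and why.
                state[name] = {"status": "failed", "attempts": attempt,
                               "error": str(exc)}
                log.warning("fail %s attempt %d: %s", name, attempt, exc)
        # Save after every task so a crash loses at most the task in flight.
        state_file.write_text(json.dumps(state, indent=2))
    return state
```

Calling `run_tasks` a second time with the same `state_file` skips everything already marked `done`. That is the unglamorous property that lets a process die overnight and pick up where it left off.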

The final judgment is clear: LLMs were never the bottleneck that got cracked. What got cracked was the thick layer of engineering insulation between LLMs and the real world.

Who cracked it? Not the best AGI storytellers. It was the people willing to connect logs, permissions, configs, paths, tools, processes, platforms, and exception handling — layer by dirty layer.

That's why Peter the systems engineer became the man of the hour.

Because a real agent isn't "a smarter mouth." A real agent is "an engineered brain."