The previous four installments of the Token Economics series covered:

- What Token is
- Why Token consumes electricity
- Why Agents burn Token like crazy
- Why Token keeps getting cheaper

All of them were about how Token is produced, consumed, and priced.

But the most important question remains unanswered:

Why is the entire world suddenly measuring everything in Token?

Put differently:

Could Token become the "currency" of the future digital economy?

The first four pieces looked at the trees.

Today, Part 5 starts looking at the forest.

Liwei's Two Minutes · Plain Language, Part 5: The New Currency of the AI Era

Many people think Token is just a technical term.

It's starting to look like much more than that.

I even suspect that decades from now, when we look back, Token may turn out to be a fundamental economic indicator, on par with electricity, steel, and oil.

Why?

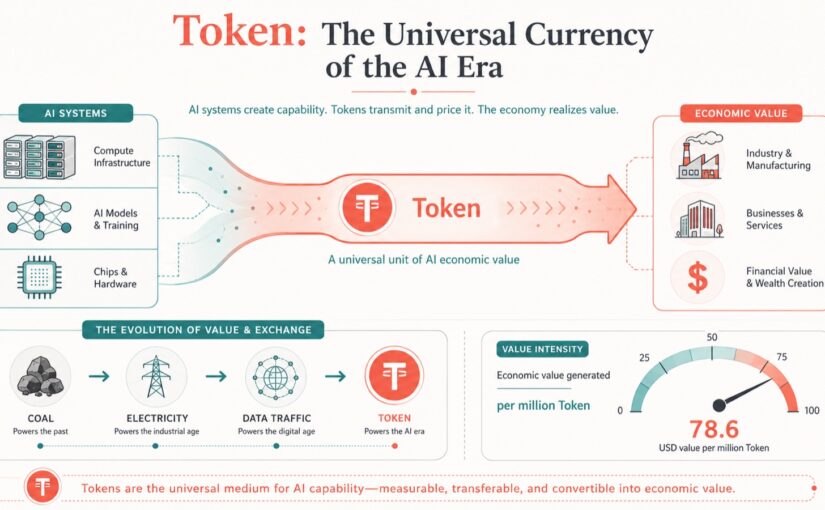

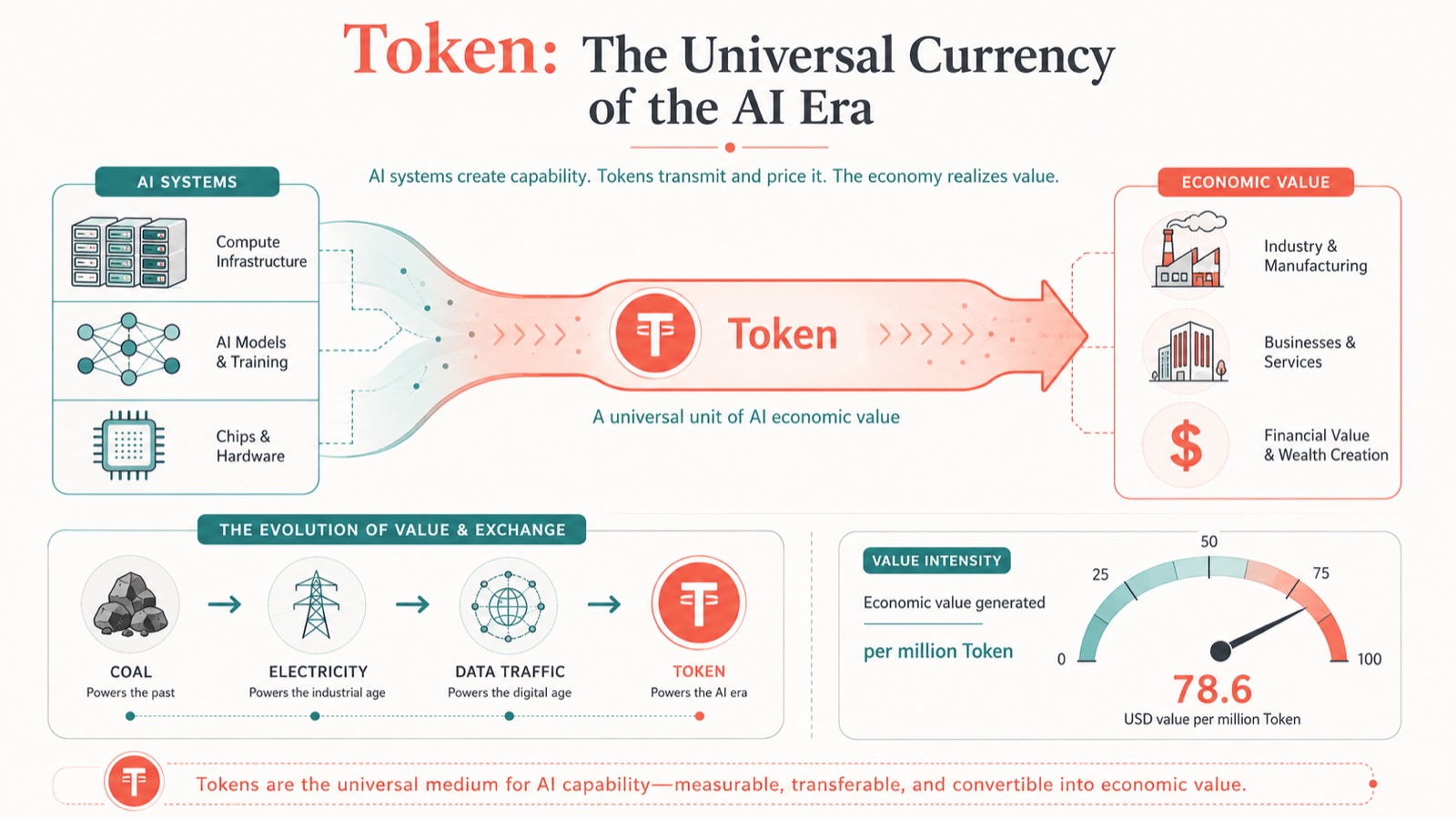

Because throughout human history, every industrial revolution eventually produces a unified unit of measurement.

The steam age was measured in coal.

The electric age was measured in kilowatt-hours.

The internet age is measured in traffic.

And in the AI age, more and more people are measuring things in Token.

The reason is simple.

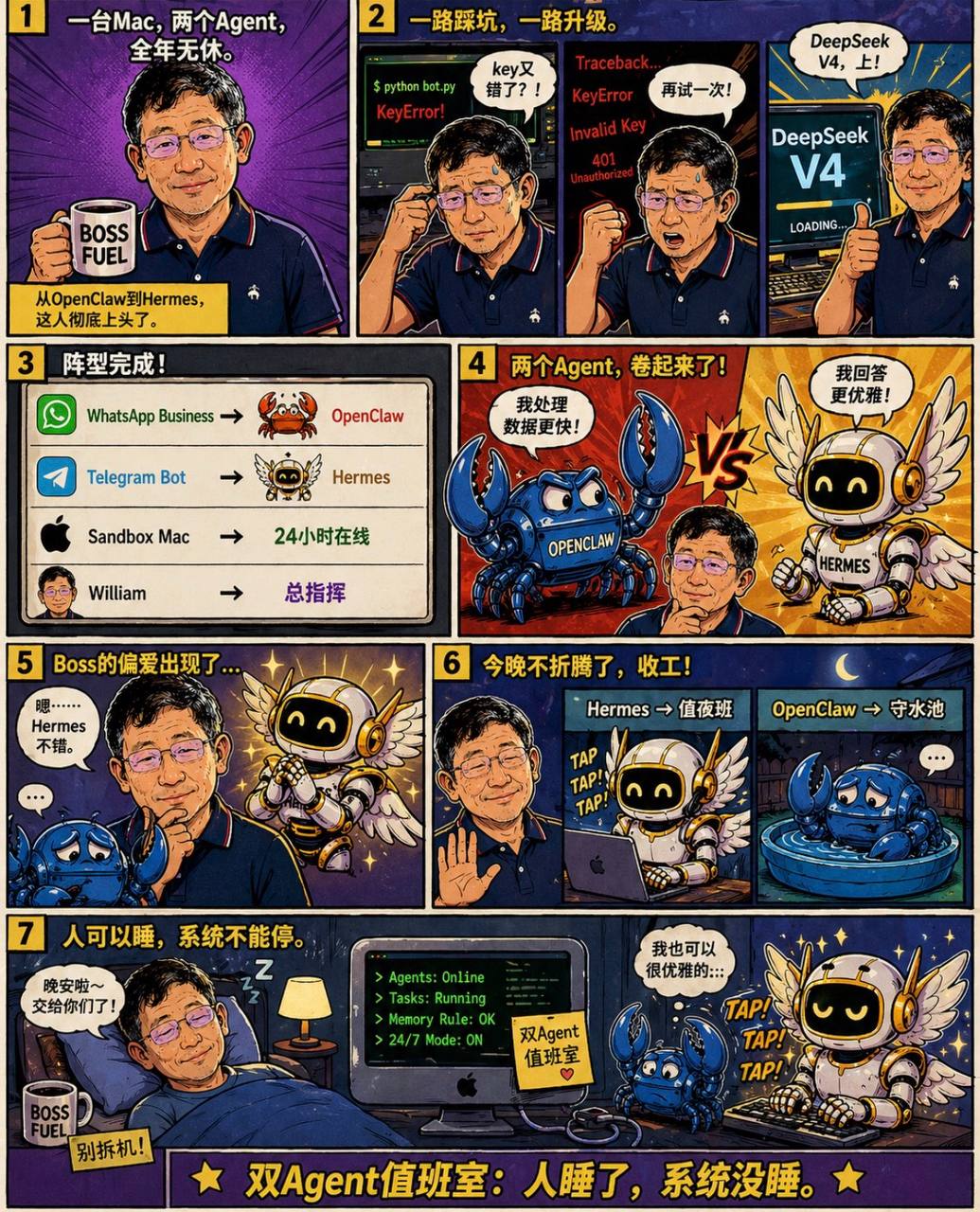

Everything AI does today ultimately comes down to Token.

Writing an article? Burning Token. Writing code? Burning Token. Making a PowerPoint? Burning Token. Generating video? Burning Token. An agent running a project? Burning Token. Even future robots doing physical work? Behind the scenes, still burning Token.

And so a strange phenomenon emerges.

We used to buy software: we paid for features.

Now it increasingly feels like we're buying Token.

Companies used to ask about IT systems: "How much per license?"

Now they're starting to ask: "How much per million Token?"

This is actually very similar to the power grid.

No one cares how many times the generator spun.

Everyone cares about one thing: how much per kilowatt-hour.

The future may be the same.

No one will care how many parameters a model has.

Everyone will care about three things: how much per million Token, how good the output is, and whether it's reliable enough.

At that point, Token shifts from a technical concept to an economic one.

And economics has a brutal law: once a product becomes standardized, it turns into a commodity, and commodities end up in a race to the bottom on price.

Steel went through it. Display panels went through it. Solar panels went through it.

Today's Token is walking the same path.

Many people are still debating which model is number one and which is number two.

The industry, meanwhile, is increasingly focused on a different question: who can produce high-quality Token at the lowest cost.

Because once AI is deployed at real scale, everything comes down to the numbers.

The boss won't ask: "Did you use the world's number one model?"

The boss will only ask: "How much did we cut costs?"

And so the AI industry starts to look less like a lab and more like manufacturing.

Many people see AI competition as a contest between brilliant scientists.

Increasingly, it looks like a contest between national industrial systems.

Whoever has cheaper electricity, more data centers, a more complete supply chain, and the ability to drive down Token prices: that's who has the edge.

So a new phenomenon may emerge in future international competition:

Alongside energy exporters and manufacturing exporters, we may see a new category: Token exporters.

Whoever can consistently export cheap, high-quality Token to the world may occupy a pivotal position in the next-generation digital economy.

In the internet age, data flowed.

In the AI age, what really flows may be Token.

And everything happening today might just be the opening act.