After I showed the benchmarking results of SyntaxNet and our rule system based on grammar engineering, many people seem to be surprised by the fact that the rule system beats the newest deep-learning based parser in data quality. I then got asked many questions, one question is:

Q: We know that rules crafted by linguists are good at precision, how about recall?

This question is worth a more in-depth discussion and serious answer because it touches the core of the viability of the "forgotten" school: why is it still there? what does it have to offer? The key is the excellent data quality as advantage of a hand-crafted system, not only for precision, but high recall is achievable as well.

Before we elaborate, here was my quick answer to the above question:

- Unlike precision, recall is not rules' forte, but there are ways to enhance recall;

- To enhance recall without precision compromise, one needs to develop more rules and organize the rules in a hierarchy, and organize grammars in a pipeline, so recall is a function of time;

- To enhance recall with limited compromise in precision, one can fine-tune the rules to loosen conditions.

Let me address these points by presenting the scene of action for this linguistic art in its engineering craftsmanship.

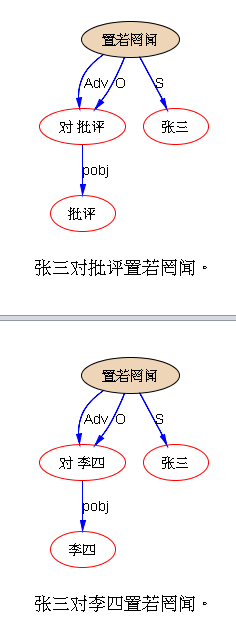

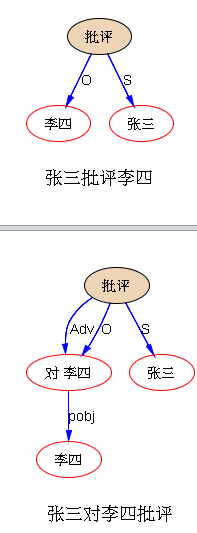

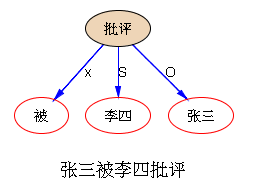

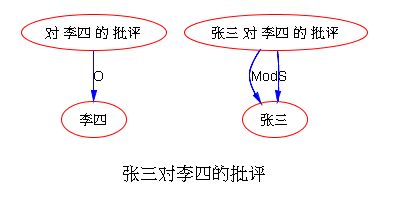

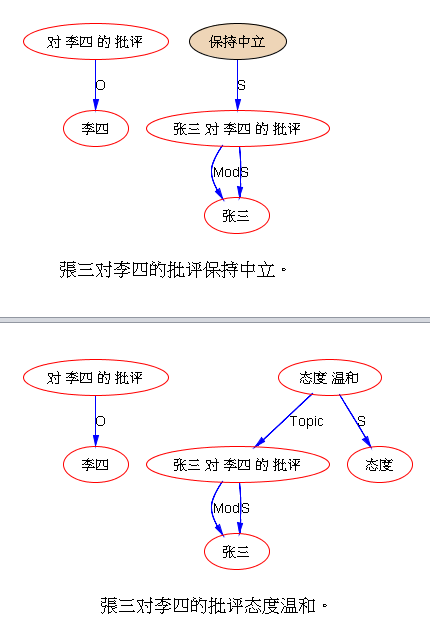

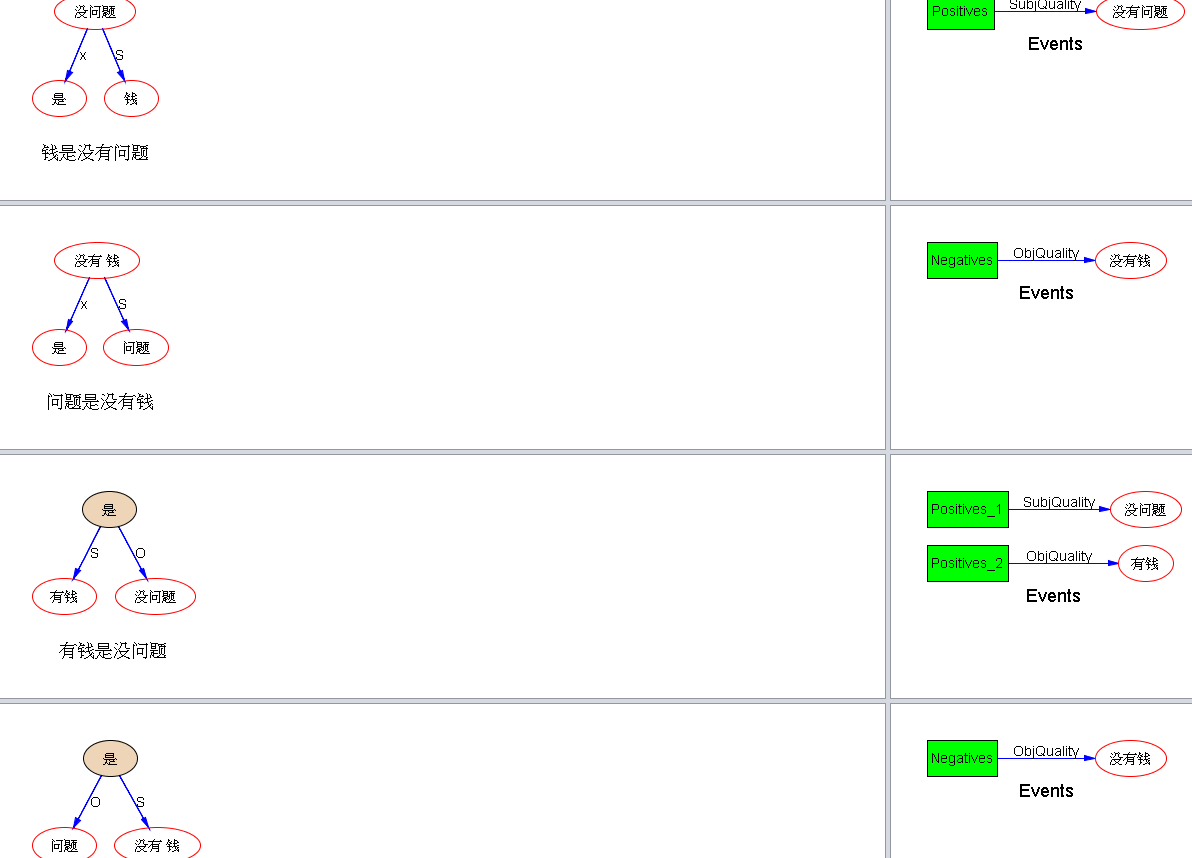

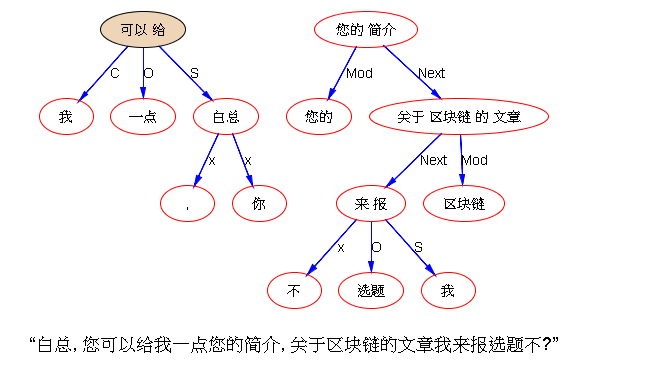

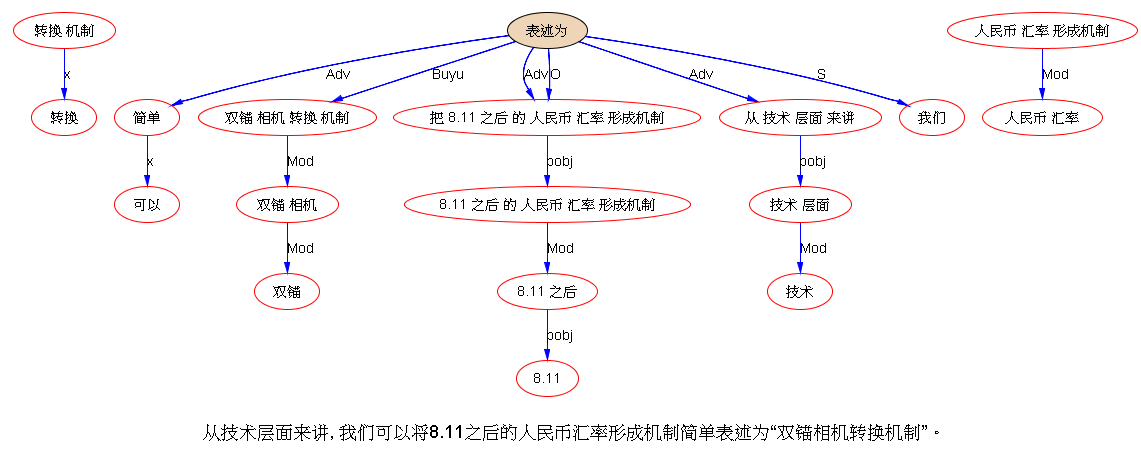

A rule system is based on compiled computational grammars. A grammar is a set of linguistic rules encoded in some formalism. What happens in grammar engineering is not much different from other software engineering projects. As knowledge engineer, a computational linguist codes a rule in a NLP-specific language, based on a development corpus. The development is data-driven, each line of rule code goes through rigid unit tests and then regression tests before it is submitted as part of the updated system. Depending on the design of the architect, there are all types of information available for the linguist developer to use in crafting a rule's conditions, e.g. a rule can check any elements of a pattern by enforcing conditions on (i) word or stem itself (i.e. string literal, in cases of capturing, say, idiomatic expressions), and/or (ii) POS (part-of-speech, such as noun, adjective, verb, preposition), (iii) and/or orthography features (e.g. initial upper case, mixed case, token with digits and dots), and/or (iv) morphology features (e.g. tense, aspect, person, number, case, etc. decoded by a previous morphology module), (v) and/or syntactic features (e.g. verb subcategory features such as intransitive, transitive, ditransitive), (vi) and/or lexical semantic features (e.g. human, animal, furniture, food, school, time, location, color, emotion). There are almost infinite combinations of such conditions that can be enforced in rules' patterns. A linguist's job is to use such conditions to maximize the benefits of capturing the target language phenomena, through a process of trial and error.

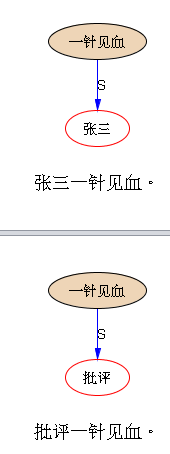

Given the description of grammar engineering as above, what we expect to see in the initial stage of grammar development is a system precision-oriented by nature. Each rule developed is geared towards a target linguistic phenomenon based on the data observed in the development corpus: conditions can be as tight as one wants them to be, ensuring precision. But no single rule or a small set of rules can cover all the phenomena. So the recall is low in the beginning stage. Let us push things to extreme, if a rule system is based on only one grammar consisting of only one rule, it is not difficult to quickly develop a system with 100% precision but very poor recall. But what is good of a system that is precise but without coverage?

So a linguist is trained to generalize. In fact, most linguists are over-trained in school for theorizing and generalization before they get involved in software industrial development. In my own experience in training new linguists into knowledge engineers, I often have to de-train this aspect of their education by enforcing strict procedures of data-driven and regression-free development. As a result, the system will generalize only to the extent allowed to maintain a target precision, say 90% or above.

It is a balancing art. Experienced linguists are better than new graduates. Out of explosive possibilities of conditions, one will only test some most likely combination of conditions based on linguistic knowledge and judgement in order to reach the desired precision with maximized recall of the target phenomena. For a given rule, it is always possible to increase recall at compromise of precision by dropping some conditions or replacing a strict condition by a loose condition (e.g. checking a feature instead of literal, or checking a general feature such as noun instead of a narrow feature such as human). When a rule is fine-tuned with proper conditions for the desired balance of precision and recall, the linguist developer moves on to try to come up with another rule to cover more space of the target phenomena.

So, as the development goes on, and more data from the development corpus are brought to the attention on the developer's radar, more rules are developed to cover more and more phenomena, much like silkworms eating mulberry leaves. This is incremental enhancement fairly typical of software development cycles for new releases. Most of the time, newly developed rules will overlap with existing rules, but their logical OR points to an enlarged conquered territory. It is hard work, but recall gradually, and naturally, picks up with time while maintaining precision until it hits long tail with diminishing returns.

There are two caveats which are worth discussing for people who are curious about this "seasoned" school of grammar engineering.

First, not all rules are equal. A non-toy rule system often provides mechanism to help organize rules in a hierarchy for better quality as well as easier maintenance: after all, a grammar hard to understand and difficult to maintain has little prospect for debugging and incremental enhancement. Typically, a grammar has some general rules at the top which serve as default and cover the majority of phenomena well but make mistakes in the exceptions which are not rare in natural language. As is known to all, naturally language is such a monster that almost no rules are without exceptions. Remember in high school grammar class, our teacher used to teach us grammar rules. For example, one rule says that a bare verb cannot be used as predicate with third person singular subject, which should agree with the predicate in person and number by adding -s to the verb: hence, She leaves instead of *She leave. But soon we found exceptions in sentences like The teacher demanded that she leave. This exception to the original rule only occurs in object clauses following certain main clause verbs such as demand, theoretically labeled by linguists as subjunctive mood. This more restricted rule needs to work with the more general rule to result in a better formulated grammar.

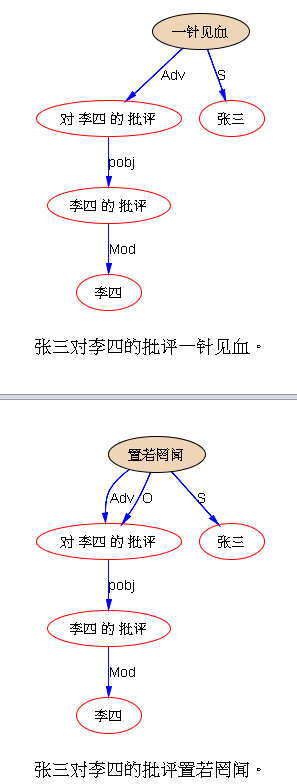

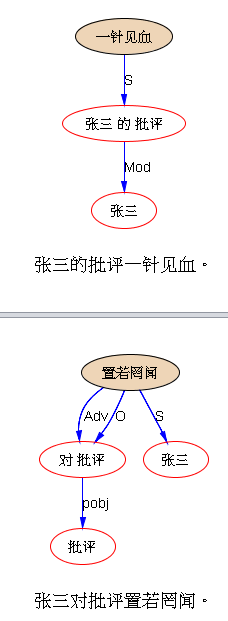

Likewise, in building a computational grammar for automatic parsing or other NLP tasks, we need to handle a spectrum of rules with different degrees of generalizations in achieving good data quality for a balanced precision and recall. Rather than adding more and more restrictions to make a general rule not to overkill the exceptions, it is more elegant and practical to organize the rules in a hierarchy so the general rules are only applied as default after more specific rules are tried, or equivalently, specific rules are applied to overturn or correct the results of general rules. Thus, most real life formalisms are equipped with hierarchy mechanism to help linguists develop computational grammars to model the human linguistic capability in language analysis and understanding.

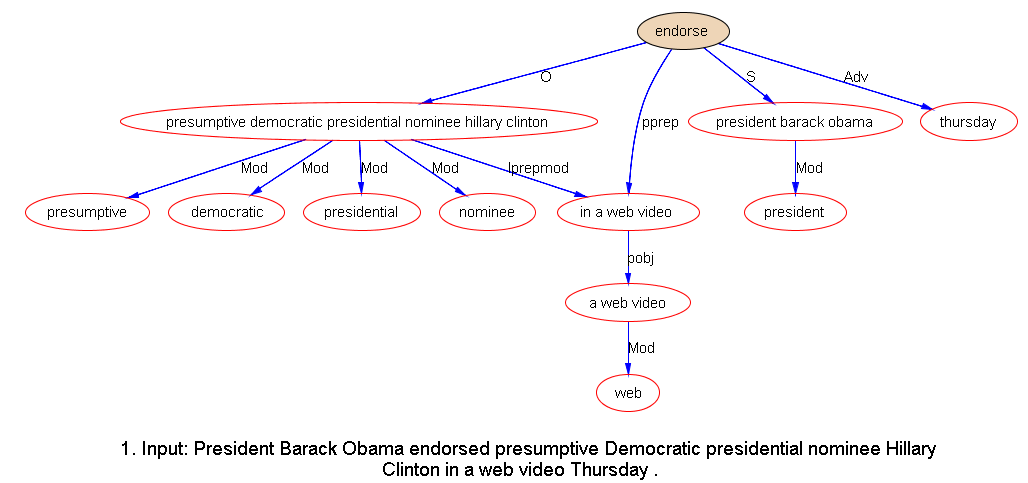

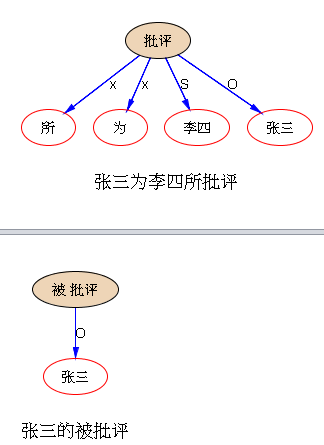

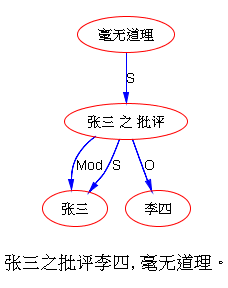

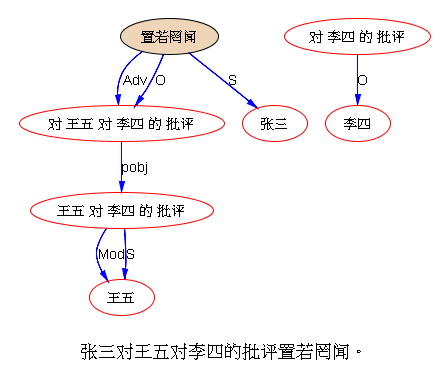

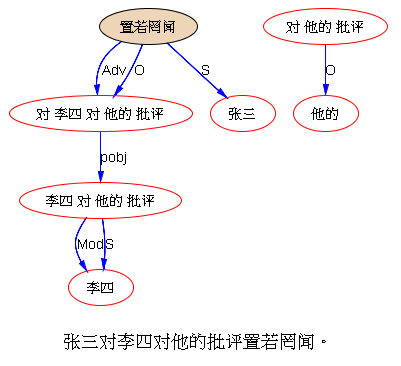

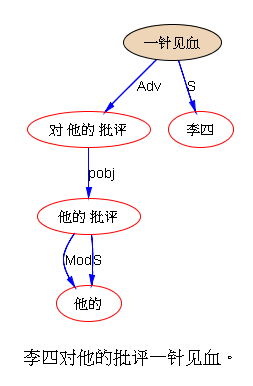

The second point that relates to the topic of recall of a rule system is so significant but often neglected that it cannot be over-emphasized and it calls for a separate writing in itself. I will only present a concise conclusion here. It relates to multiple levels of parsing that can significantly enhance recall for both parsing and parsing-supported NLP applications. In a multi-level rule system, each level is one module of the system, involving a grammar. Lower levels of grammars help build local structures (e.g. basic Noun Phrase), performing shallow parsing. System thus designed are not only good for modularized engineering, but also great for recall because shallow parsing shortens the distance of words that hold syntactic relations (including long distance relations) and lower level linguistic constructions clear the way for generalization by high level rules in covering linguistic phenomena.

In summary, a parser based on grammar engineering can reach very high precision and there are proven effective ways of enhancing its recall. High recall can be achieved if enough time and expertise are invested in its development. In case of parsing, as shown by test results, our seasoned English parser is good at both precision (96% vs. SyntaxNet 94%) and recall (94% vs. SyntaxNet 95%, only 1 percentage point lower than SyntaxNet) in news genre, and with regards to social media, our parser is robust enough to beat SyntaxNet in both precision (89% vs. SyntaxNet 60%) and recall (72% vs. SyntaxNet 70%).

[Related]

Is Google SyntaxNet Really the World’s Most Accurate Parser?

It is untrue that Google SyntaxNet is the “world’s most accurate parser”

R. Srihari, W Li, C. Niu, T. Cornell: InfoXtract: A Customizable Intermediate Level Information Extraction Engine. Journal of Natural Language Engineering, 12(4), 1-37, 2006

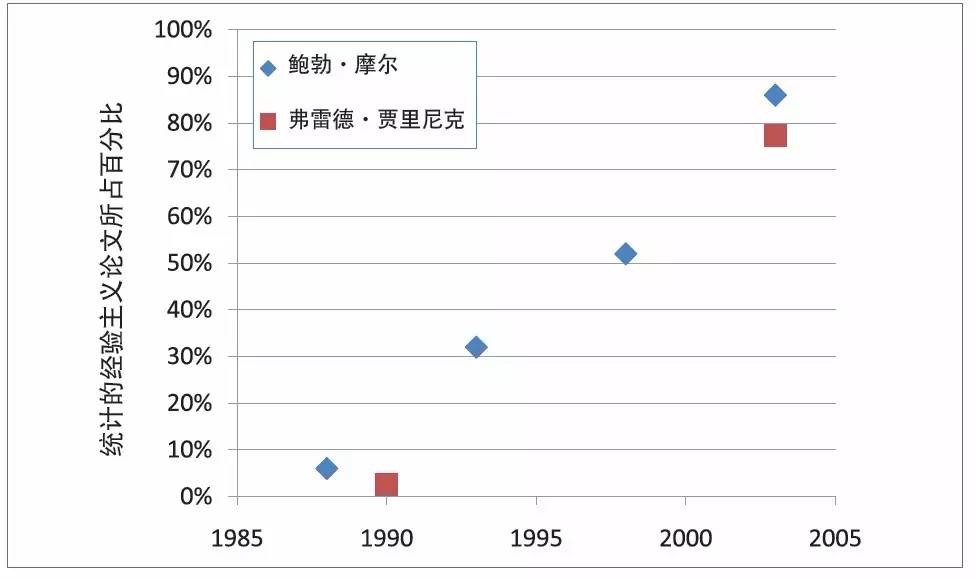

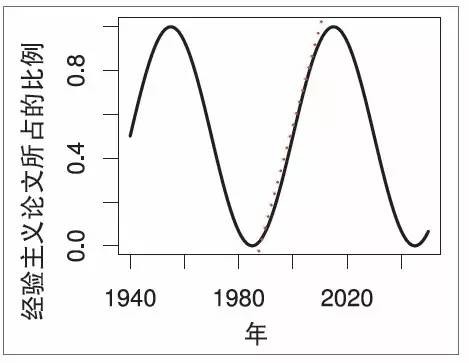

K. Church: A Pendulum Swung Too Far, Linguistics issues in Language Technology, 2011; 6(5)

Pros and Cons of Two Approaches: Machine Learning vs Grammar Engineering

Pride and Prejudice of NLP Main Stream

On Hand-crafted Myth and Knowledge Bottleneck

Domain portability myth in natural language processing

Introduction of Netbase NLP Core Engine

Overview of Natural Language Processing

Dr. Wei Li’s English Blog on NLP